This article originally appeared in Game Developer Magazine, December 2006.

OPTIMIZING ASSET PROCESSING

The fundamental building block of any game asset pipeline is the asset processing tool. An asset processing tool is a program or piece of code that takes data in one format and performs some operations on it, such as converting it into a target-specific format, or performing some calculation such as lighting or compression. This article discusses the performance issues with these tools, and gives some ideas for optimization with a focus on minimizing I/O.

THE UGLY SISTER

Asset conversion tools are too often neglected during development. Since they are usually well specified and discrete pieces of code, they can be easily tasked to junior programmers. It is generally easy for any programmer to create a tool that works to a simple specification, and at the start of a project the performance of the tool is not so important, as the size of the data involved is generally small and the focus is simply on getting things up and running.

However, towards the end of the project, the production department often realizes that a large amount of time is being wasted waiting for these tools to complete their tasks. The accumulation of near-final game data, and the more rapid iterations of the debugging and tweaking phase, make the speed of these tools paramount. Further time may be wasted trying to optimize the tools at this late stage, and making significant changes to processes and code during the testing phase carries a significant risk of introducing bugs into the asset pipeline (and the game).

Hence it is highly advisable to devote sufficient time to optimizing your asset pipeline at an early stage in development. This process should involve personnel who have advanced experience in the types of optimization needed. This early application of optimization is another example of what I call “Mature Optimization” (see Game Developer Magazine, January 2006). There are a limited number of man-hours available in the development of a game. If you wait until the need for optimization becomes apparent, then you will already have wasted hundreds of those man-hours.

THE NATURE OF THE DATA

Asset processing tools come in three flavors: converters, calculators and packers. Converters take data which is arranged in a particular set of data structures, and re-arrange it into another set of data structures which are often machine or engine specific. A good example here is a texture converter, which might take a texture in .PNG format, and convert it to a form that can be directly loaded into the graphics memory of the target hardware.

Secondly we have asset calculators. These take an asset, or group of assets, and perform some set of calculations on them such as calculating lighting and shadows, or creating normal maps. Since these operations involve a lot of calculations, and several passes over the data, they typically take a lot longer than the asset conversion tools. Sometimes they take large assets, such as high resolution meshes, and produce smaller assets, such as displacement maps.

Thirdly we have asset packers. These take the individual assets and package them into data sets for use in particular instances in the game, generally without changing them much. This might involve simply gathering all the files used by one level of the game and arranging them into a WAD file. Or it might involve grouping files together in such a way that streaming can be effectively performed when moving from one area of the game to another. Since the amount of data involved can be very large, the packing process can take a lot of time and be very resource intensive, requiring lots of memory and disk space, especially for final builds.

TWEAKING OPTIMIZATION

You may be surprised how often the simplest method of optimization is overlooked. Are you letting the content creators use the debug version of a tool? It’s a common mistake for junior programmers, but even the most experienced programmers sometimes overlook this simple step. So before you do anything, try turning the optimization settings on and off, and make sure that there is a noticeable speed difference. Then, in release mode, try tweaking some settings, such as “Optimize for speed” and “Optimize for size”. Depending on the nature of the data, and on the current hardware you are running the tools on, you might actually get faster code if you use “Optimize for size”. The optimal optimization setting can vary from tool to tool.

Be careful when testing the speed of your code when doing things like tweaking optimization settings. In a multi-tasking operating system like Windows XP, there is a lot going on, so your timings can vary a lot from one run to the next. Taking the average is not always a useful measure either, as it can be greatly skewed by random events. A more accurate way is to compare the lowest times of two different settings, as that will be closest to the “pure” run of your code.
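As a minimal sketch of the “compare the lowest times” idea, the helper below runs a piece of work several times and keeps only the fastest run. The name best_time_ms and the use of std::chrono are my own assumptions for illustration; any reasonably precise timer would do.

```cpp
#include <chrono>
#include <functional>

// Runs `work` `runs` times and returns the fastest wall-clock time in
// milliseconds. The fastest run is the one least disturbed by other
// processes. Returns a negative value if `runs` is zero or less.
// (best_time_ms is a hypothetical helper name, not part of any library.)
double best_time_ms(const std::function<void()>& work, int runs)
{
    using clock = std::chrono::steady_clock;
    double best = -1.0;
    for (int i = 0; i < runs; ++i) {
        const auto start = clock::now();
        work();
        const std::chrono::duration<double, std::milli> elapsed = clock::now() - start;
        if (best < 0.0 || elapsed.count() < best) best = elapsed.count();
    }
    return best;
}
```

You would call this once per build setting, for example best_time_ms(convert_all_textures, 10) where convert_all_textures stands in for your own tool's entry point, and compare the two results. Bear in mind that for a tool that reads files, the first run may also include the cost of warming the file cache.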

PARALLELIZE YOUR CODE

Most PCs now have some kind of multi-core processor and/or hyper-threading. If your tools are written in the traditional mindset of a single processing thread, then you are wasting a significant amount of the silicon you paid for, as well as wasting the time of the artists and level designers as they wait for their assets to be converted.

Since asset data generally consists of large chunks of homogeneous data, such as lists of vertices and polygons, it is generally very amenable to data level parallelization with worker threads, where the same code is run on multiple chunks of similar data concurrently, taking advantage of the cache. For details on this approach see my article “particle tuning” in Game Developer Magazine, April 2006.
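To make the idea concrete, here is a rough sketch of data level parallelization using std::thread. The scaling operation and the names scale_chunk and parallel_scale are placeholders I have made up; substitute whatever per-element work your tool actually does. It assumes the chunks are independent, so no locking is needed.

```cpp
#include <algorithm>
#include <cstddef>
#include <thread>
#include <vector>

// Hypothetical per-chunk operation: scales every value in [begin, end).
static void scale_chunk(float* begin, float* end, float factor)
{
    for (float* p = begin; p != end; ++p) *p *= factor;
}

// Splits `data` into contiguous chunks and runs scale_chunk on each chunk
// in its own worker thread. The chunks do not overlap, so the workers
// never touch the same element.
void parallel_scale(std::vector<float>& data, float factor, unsigned workers)
{
    if (workers == 0) workers = 1;
    const std::size_t chunk = (data.size() + workers - 1) / workers;
    std::vector<std::thread> threads;
    for (std::size_t start = 0; start < data.size(); start += chunk) {
        const std::size_t end = std::min(start + chunk, data.size());
        threads.emplace_back(scale_chunk, data.data() + start, data.data() + end, factor);
    }
    // Wait for every worker to finish before returning
    for (std::thread& t : threads) t.join();
}
```

A typical call would pass std::thread::hardware_concurrency() as the worker count. That function is allowed to return zero when the core count is unknown, which is why the sketch falls back to a single worker.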

TUNE YOUR MACHINES

Anti-virus software should be configured so that it does not scan the directories that your assets reside in, and also does not scan the actual tools. Poorly written anti-virus and other security tools can significantly impact the performance of a machine that does a lot of file operations. Try running a build both with and without the anti-virus software, and see if there is any difference. Consider removing the anti-virus software entirely.

If you are using any form of distributed “farm” of machines in the asset pipeline, then beware of any screensaver other than “Turn off monitor”. Some screensavers can use a significant chunk of processing power. You need to be especially careful of this problem when repurposing a machine, as the previous user may have installed their favorite screensaver, which does not kick in for several hours, and then slows that machine down to a crawl.

WRITE BAD CODE

In-house tools do not always need to be up to the same code standards as the code you use in your commercially released games. Sometimes it is possible to get performance benefits by making certain dangerous assumptions about the data you are processing, and about the hardware it will be running on.

Instead of constantly allocating buffers as needed, try just allocating a “reasonable” chunk of memory as a general purpose buffer. If you’ve got debugging code, make sure you can switch it off. Beware of logging or other instrumenting functions, as they can end up taking more time than the code they are logging. If earlier stages in the pipeline are robust enough, then (very carefully) consider removing error and bounds checking from later stages if you can see they are a significant factor. If you’ve got a bunch of separate programs, consider bunching them together into one uber-tool to cut down on load times. All these are bad practices, but given the limited lifetime of these tools, the rewards may outweigh the risks.
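The general purpose buffer might look something like the sketch below. The 64 MB size and the names kScratchSize, g_scratch and get_scratch are assumptions for illustration only. Note that it is deliberately “bad” in the sense discussed here: there is one shared buffer, it is never freed, and only one piece of code can use it at a time.

```cpp
#include <cstddef>
#include <cstdlib>

// Assumed "reasonable" size for the scratch buffer; tune it to your data.
static const std::size_t kScratchSize = 64 * 1024 * 1024;

// The single shared buffer, allocated on first use and reused afterwards.
static unsigned char* g_scratch = nullptr;

// Returns the shared scratch buffer, or nullptr if the request is larger
// than the buffer (or the one-time allocation failed).
// Not thread safe, and each caller overwrites the previous caller's data.
unsigned char* get_scratch(std::size_t bytes_needed)
{
    if (bytes_needed > kScratchSize) return nullptr;
    if (g_scratch == nullptr)
        g_scratch = static_cast<unsigned char*>(std::malloc(kScratchSize));
    return g_scratch;
}
```

Every conversion step then asks get_scratch for working space instead of calling malloc and free itself, which removes the allocation cost from the inner loops at the price of the assumptions listed above.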

MINIMIZE I/O

Old programmers tend to write conversion tools using the standard C I/O functions: fopen, fread, fwrite, fclose, etc. The standard way of doing things is to open an input file and an output file, then read in chunks of data from the input file (with fread or fgetc), and write them to the output file (with fwrite or fputc).

This approach has the advantage of being simple, easy to understand, and easy to implement. It also uses very little memory, so you quite often see tools written like this. The problem is that it’s insanely slow. It’s a hold-over from the (really) bad old days of computing, when processing large amounts of data meant reading from one spool of tape, and writing to another.

Younger programmers will learn to use C++ I/O “streams”, which are intended to make it easy for data structures to be read and written in a binary format. But when used to read and write files, they suffer from the same problems as our older C programmer’s approach. They are still stuck in the same serial model of “read a bit, write a bit” that is excessively slow, and mostly unnecessary on modern hardware.

Unless you are doing things like encoding MPEG data, you will generally be dealing with files that are smaller than a few tens of megabytes. Most developers will now have a machine with at least a gigabyte of memory. If you are going to be processing the whole file a piece at a time, then there is no reason why you should not load the entire file into memory. Similarly, there is no reason why you should have to write your output file a few bytes at a time. Build the file in memory, and write it out all at once.

You might counter that that’s what the file cache is there for. It’s true, the OS will buffer reads and writes in memory, and very few of those reads or writes will actually cause physical disk access. But the overhead associated with using the OS to buffer your data versus simply storing it in a raw block of memory is very significant.

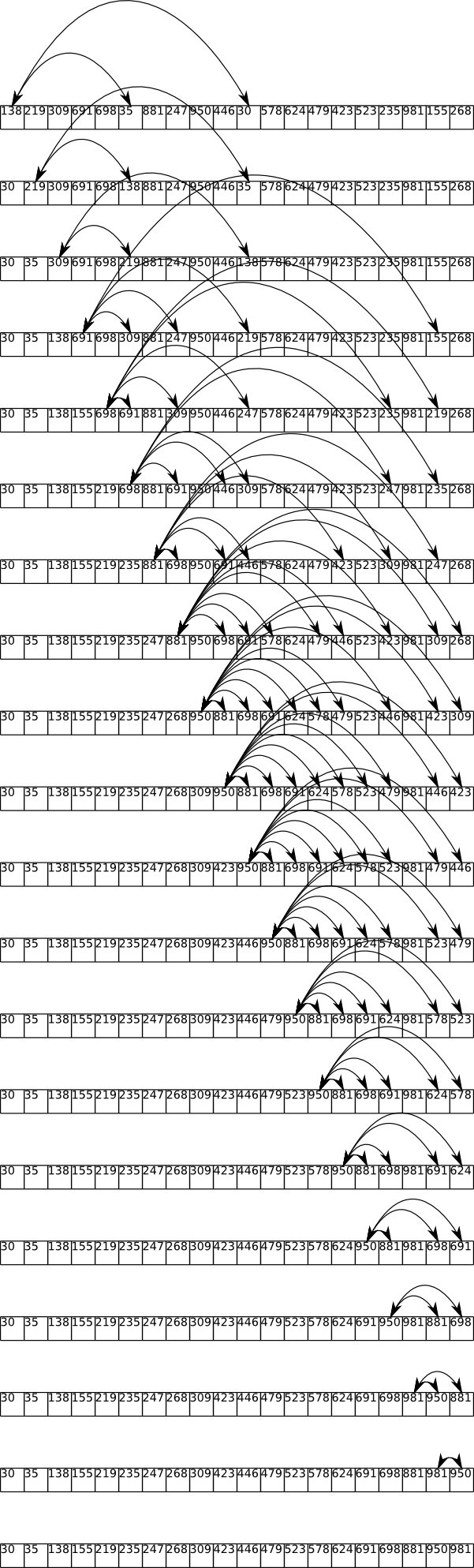

For example, Listing 1 shows a very simple file conversion program that takes a file, and writes out a version of the file with all the zero bytes replaced with 0xFF. It’s simple for illustration purposes, but many file format converters do not do significantly more CPU work than this simple example.

Listing 1: Old fashioned file I/O

[source:cpp]

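#include <stdio.h>

int main()
{
    FILE *f_in = fopen("IMAGE.JPG", "rb");
    FILE *f_out = fopen("IMAGE.BIN", "wb");
    if (f_in == NULL || f_out == NULL) return 1;

    // Find the size of the input file
    fseek(f_in, 0, SEEK_END);
    long size = ftell(f_in);
    rewind(f_in);

    // Copy one byte at a time, replacing zero bytes with 0xFF
    for (long b = 0; b < size; b++) {
        int c = fgetc(f_in);
        if (c == 0) c = 0xff;
        fputc(c, f_out);
    }

    fclose(f_in);
    fclose(f_out);
    return 0;
}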

[/source]

Listing 2 shows the same program converted to read the whole file into a buffer, process it, and write it out again. The code is slightly more complex, yet this version executes approximately ten times as fast as the version in Listing 1.

Listing 2: Reading the whole file into memory

[source:cpp]

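#include <stdio.h>
#include <stdlib.h>

int main()
{
    FILE *f_in = fopen("IMAGE.JPG", "rb");
    if (f_in == NULL) exit(1);

    // Find the size of the input file
    fseek(f_in, 0, SEEK_END);
    long size = ftell(f_in);
    rewind(f_in);

    // Read the whole file into one buffer
    char *p_buffer = (char*) malloc(size);
    fread(p_buffer, size, 1, f_in);
    fclose(f_in);

    // Replace zero bytes with 0xFF in place
    unsigned char *p = (unsigned char*) p_buffer;
    for (long x = 0; x < size; x++, p++)
        if (*p == 0) *p = 0xff;

    // Write the whole buffer out in one call
    FILE *f_out = fopen("IMAGE.BIN", "wb");
    fwrite(p_buffer, size, 1, f_out);
    fclose(f_out);
    free(p_buffer);
    return 0;
}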

[/source]

MEMORY MAPPED FILES

The use of serial I/O is a throwback to the days of limited memory and tape drives. But a combination of factors means it’s still useful to think of your file conversion as an essentially serial process. Firstly, since file operations can proceed asynchronously, you can be processing data at the same time as it is being read in, and begin writing it out as soon as some is ready. Secondly, memory is slow, and processors are fast. This can lead us to think of normal random access memory as just a very fast hard disk, with your processor’s cache memory as your actual working memory.

While you could write some complex multi-threaded code to take advantage of the asynchronous nature of file I/O, you can get the full advantages of both this and optimal cache usage by using Windows’ memory mapped file functions to read in your files.

The process of memory mapping a file is really very simple. All you are doing is telling the OS that you want a file to appear as if it is already in memory. You can then process the file exactly as if you just loaded it yourself, and the OS will take care of making sure that the file data actually shows up as needed.

This gives you the advantage of asynchronous I/O, since you can start processing as soon as the first page of the file is loaded, and the OS will take care of reading in the rest of the file as needed. It also makes best use of the memory cache, especially if you process the file in a serial manner. The act of memory mapping a file also ensures that there is the very minimum amount of moving data around. No buffers need to be allocated.

Listing 3 shows the same program converted to use memory mapped I/O. Depending on the state of virtual memory and the file cache, this is several times faster than the “whole file” approach in Listing 2. It looks annoyingly complex, but you only have to write it once. The amount of speed-up will depend on the nature of the data, the hardware and the size and architecture of your build pipeline.

Listing 3: Using memory mapped files

[source:cpp]

#include <windows.h>

int main()
{
    // Open the input file and memory map it
    // (CreateFileW is used explicitly because the file names are wide strings)
    HANDLE hInFile = ::CreateFileW(L"IMAGE.JPG",
        GENERIC_READ, FILE_SHARE_READ, NULL, OPEN_EXISTING, FILE_ATTRIBUTE_READONLY, NULL);
    if (hInFile == INVALID_HANDLE_VALUE) return 1;
    DWORD dwFileSize = ::GetFileSize(hInFile, NULL);
    HANDLE hMappedInFile = ::CreateFileMapping(hInFile, NULL, PAGE_READONLY, 0, 0, NULL);
    LPBYTE lpMapInAddress = (LPBYTE) ::MapViewOfFile(hMappedInFile, FILE_MAP_READ, 0, 0, 0);

    // Open the output file, and memory map it
    // (Note we specify the size of the output file)
    HANDLE hOutFile = ::CreateFileW(L"IMAGE.BIN",
        GENERIC_WRITE | GENERIC_READ, 0, NULL, CREATE_ALWAYS, FILE_ATTRIBUTE_NORMAL, NULL);
    HANDLE hMappedOutFile = ::CreateFileMapping(hOutFile, NULL, PAGE_READWRITE, 0, dwFileSize, NULL);
    LPBYTE lpMapOutAddress = (LPBYTE) ::MapViewOfFile(hMappedOutFile, FILE_MAP_WRITE, 0, 0, 0);

    // Perform the translation
    // Note there is no reading or writing, the OS takes care of that as needed
    char *p_in = (char*) lpMapInAddress;
    char *p_out = (char*) lpMapOutAddress;
    for (DWORD x = 0; x < dwFileSize; x++) {
        char c = *p_in++;
        if (c == 0) c = (char) 0xff;
        *p_out++ = c;
    }

    // Unmap the views and close the files
    ::UnmapViewOfFile(lpMapInAddress);
    ::UnmapViewOfFile(lpMapOutAddress);
    ::CloseHandle(hMappedInFile);
    ::CloseHandle(hMappedOutFile);
    ::CloseHandle(hInFile);
    ::CloseHandle(hOutFile);
    return 0;
}

[/source]

RESOURCES

Noel Llopis, Optimizing the Content Pipeline, Game Developer Magazine, April 2004

http://www.convexhull.com/articles/gdmag_content_pipeline.pdf

Ben Carter, The Game Asset Pipeline: Managing Asset Processing, Gamasutra, Feb 21, 2005

http://www.gamasutra.com/features/20050221/carter_01.shtml